On AI, prompts, and the strange new asymmetry between two high-dimensional minds

Yesterday I read a piece by Joshua Leigh, “The Prompt is Not an Interface,” and it stopped me in my tracks. Leigh argues that decades of interaction design were spent moving away from typed commands toward direct manipulation — toward seeing and pointing and dragging — and that with AI we have retreated, all at once, to the very paradigm those researchers spent their careers escaping. For tasks that are inherently visual or spatial, he writes, the text prompt forces a translation that loses signal at every step. I found this clarifying. I also found myself walking around for the rest of the day with a quieter, neighbouring question that his essay had loosened in me.

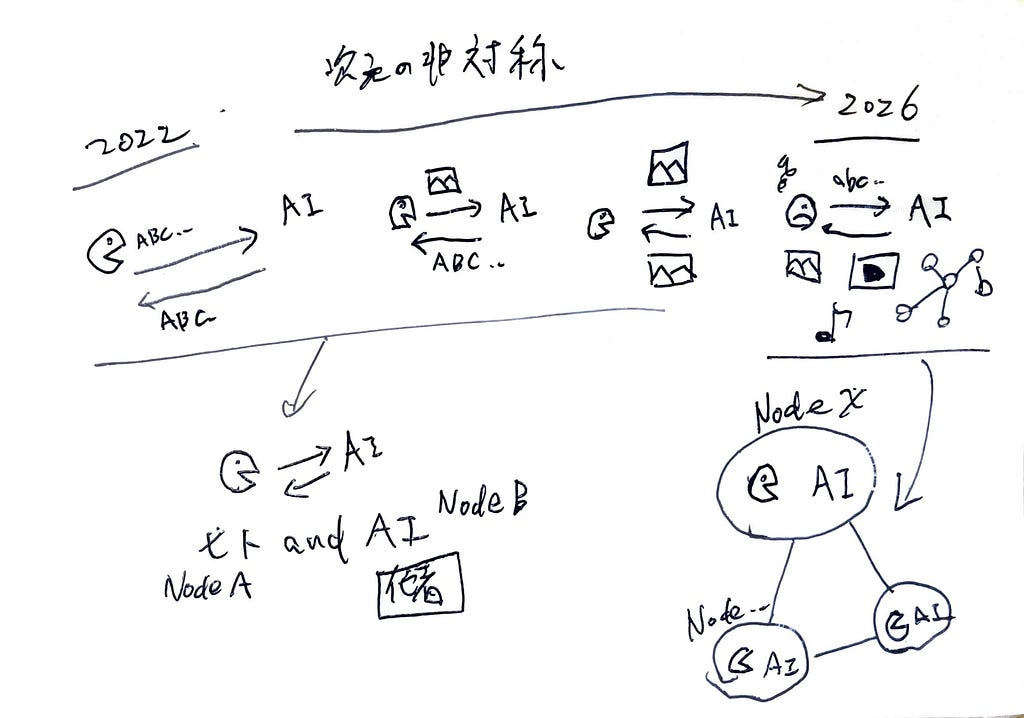

The question is this: why didn’t we feel this strain in 2022? And why are people starting to feel it so sharply now?

What follows is one attempt at an answer. It is offered alongside Leigh’s piece, not in place of it. I want to ask what changed, and what it might be asking of us.

Where humans communicate without strain

Let me start with a vocabulary I will lean on throughout. A *node*, in the way I will use the word here, is simply a point capable of processing information — receiving it, transforming it, passing it on. You are a node. So is your closest collaborator. So, increasingly, is the AI on the other side of your screen. Between any two nodes runs a *channel*: the medium through which information actually moves. Sentences. Gestures. Sketches. Tone of voice.

Among humans, we rarely feel that channels are inadequate for nodes. This is not because we are talented. It is because we have spent thousands of years building channels of many different shapes for many different kinds of thought.

An architect needs to convey the spatial reality of a building that does not yet exist, and so reaches for the plan, the elevation, the section: a multidimensional channel for what words could not carry. A choreographer needs to show a dancer the shape of a movement, and so demonstrates it, body to body. The humans who painted the walls of El Castillo needed to leave something of their seeing behind, and reached for ochre and the curve of a cave wall, a channel far older than writing and, in some ways, still unsurpassed. A child needs to tell their parent how scared they are, and crawls into their lap without saying anything at all. Leigh, in his own essay, gathers a set of examples from architecture, animation, and design, which he calls the *visual pre-language*: the architectural drawing, the storyboard, the thumbnail sketch.

Across all these examples, there is a quiet principle at work. The dimensionality of the channel matches the dimensionality of what the nodes are trying to share. Spatial thought travels through spatial media. Bodily thought travels through bodies. Linguistic thought travels through language, and travels well — Leigh is careful to note that text prompts are excellent for linguistic tasks. The strain disappears when the channel is sufficient for the nodes it joins. We do not always have the skill to use a given channel well, of course. Drawing is hard. So is dancing. So is writing. But the channels themselves are there, and they fit.

When the prompt felt fine

Now think back to late 2022, or — if you live in Japan as I do — to the spring of 2023, when ChatGPT first arrived in earnest.

Almost no one I knew, including me, complained about prompts. The text box felt natural. We typed things in, the model typed things back, and the experience was, on the whole, delightful. People shared screenshots. They wrote about how to phrase questions better. They marvelled at the surprise of being understood.

Why did it feel fine?

Because what was on the other end of the channel was, more or less, a producer of text. Its outputs lived in the same medium as its inputs. The fun of those early months was largely the fun of compression and expansion in a single dimension — how much of a complicated, multidimensional thought could you fold into a paragraph, and how much could the model unfold out of a paragraph in return? The channel was a one-dimensional pipe, and the node at the other end was also, in effect, one-dimensional. There was no asymmetry to feel.

The other end kept widening

And then, quietly and quickly, the other end stopped being one-dimensional.

First, it learned to make a chart from a description, and we were amused. Then it learned to generate images, and we were astonished. Then audio, and then video, and then 3D, and then to read images we showed it, and then to navigate browsers and run code, and then to act in long agentic loops, combining several of these modalities at once. The node on the other side of the screen acquired, dimension by dimension, a working command of nearly every medium humans have ever used to think.

None of this happened in a single dramatic announcement. It accumulated. And somewhere along the way, without anyone marking the moment, the two nodes on either end of the channel became roughly comparable in the dimensionality of thought each could handle.

The asymmetry we now feel

This is the moment, I think, at which the prompt began to feel narrow.

It is not that the channel itself changed. The text box is still mostly the text box. It has grown at the edges: you can attach an image now, paste a screenshot, drop in a PDF. But our main means of telling the model what we want still leans on the one-dimensional prompt. What changed is what sits at the other end. We are now reaching, through that mostly one-dimensional channel, toward something that can, or appears to, hold and produce thought in many dimensions at once.

Leigh’s structural failure (text prompts losing signal on visual and spatial tasks) is, I think, one face of this larger asymmetry. The channel does fine when both ends agree to live inside language. It strains the moment one end can act, or appear to act, almost everywhere else as well, while the other end (us) has to keep funnelling everything through words.

This, I want to suggest, is what the feeling people have started to recognize — “there has to be a better way to do this” — actually is. It is the recognition that two high-dimensional minds are reaching for each other through a passage built for a simpler conversation.

From a good other to a node in the same system

And I have started to wonder whether this is not merely a quantitative change — more dimensions on the far side — but something quieter and more interesting underneath: a change in the kind of relationship we are forming with the thing on the other end.

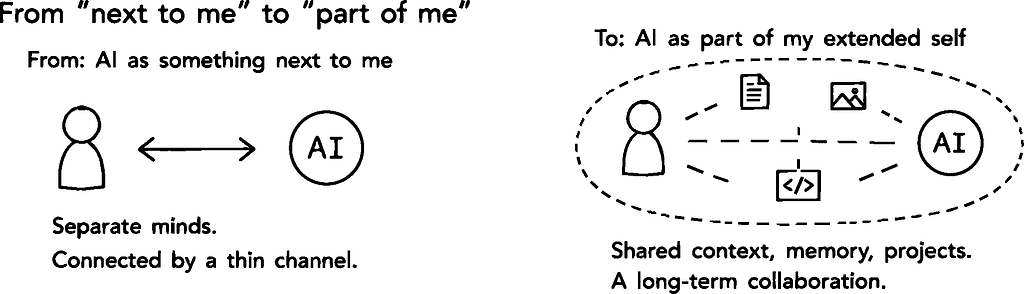

Until recently, AI sat in the position humans have always reserved for other capable beings: collaborator, mentor, contractor, oracle. A *good other*. With good others, narrow channels are tolerable. You and I can do real work together with nothing but a telephone line, because we are two complete minds occasionally pinging each other across a thin connection. The work happens inside us. The channel just synchronizes it.

Something subtly different seems to be happening now. People talk about their AI as part of their thinking, not adjacent to it. They build long-running collaborations with personalized models that know their work, their voice, their projects. The sense of self extends, the way it has always extended in close teams or families or communities, into the surrounding nodes. A football team on a good night is one system; the players, briefly, are something like neurons in it. Companies and communities are places where individual minds dissolve, partially, into a larger information-processing system.

If AI is starting to be experienced not as a good other but as a node inside the same system as us, then narrow channels stop being tolerable. Inside a single system, neurons need rich connections to each other, not telephone lines. The frustration with the prompt is, on this reading, the first ache of a system trying to integrate across an interface designed for two separate parties, at the very moment those two parties, us and the AI, have begun to handle high-dimensional thought together.

What the flesh-and-blood node is for

If this is right, then the obvious next question is: what does the human node do inside such a system? Leigh, in his essay, addresses the channel — how to widen it so that visual and spatial thought can pass through. The other node, the AI, will keep getting more capable in its own way; that is not in doubt. But what about us?

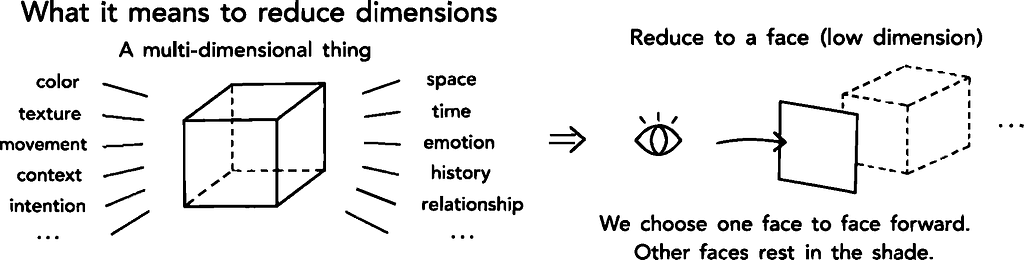

One answer that keeps returning to me is this: at least for now, the distinctive work of the human node, inside a human-AI system, is to govern the dimensionality of information in ways the other node cannot yet quite match. To decide what should be flattened into a one-dimensional sentence and what should be kept multidimensional. To know when a feeling needs to stay a feeling, when a relationship needs to stay a relationship, when a place needs to stay a place. To integrate information, somewhere inside a not-yet-understood mass of protein, in ways that are neither fully reproducible nor fully rational — and to trust that integration anyway.

To translate something into a lower dimension, it is worth noting, is not the same as throwing the rest away. It is to choose which face of the multidimensional thing to turn forward, and let the other faces rest in shadow for now. Done well, this is itself a craft. Done carelessly, it is the quiet loss of half of what we know.

So part of the human node’s work is to let some things rest where they are, without rushing to translate or transmit them. And, when translation into a lower dimension cannot be avoided, to do it with care and with respect for what is being translated. Much of what design actually is, I have come to believe, lives in these two small disciplines.

This shows up, in practice, as a series of small refusals to flatten. A prayer kept ritual rather than scripted. A festival kept embodied rather than streamed. *On the small island where I live, the songs of thanksgiving to the gods are still passed down through recordings of voices, not through written scores — a score would lose what makes the song a song.* A street kept walkable rather than navigable. A friendship kept patient rather than messaged.

And inside that work, there is a region that, for the moment at least, no other node seems able to take over from us. It is the region of intuition, of aesthetic judgment, of ethics held in the body before it is held in argument, and of what anthropologists, following Claude Lévi-Strauss, have called *magical thinking*: not the dismissive sense in which we usually hear those words, but the sense Lévi-Strauss explored, a coherent, structured way of knowing the world that runs alongside scientific rationality rather than beneath it. Flesh-and-blood thinking has access to that region in a way that nothing made of weights and tokens, no matter how high-dimensional, currently does.

Some part of our job, then, is to keep doing this strange internal work — converting and recombining information inside ourselves, often in ways we cannot fully explain — and to bring to the channel only what is asked for, only what fits.

To close

So — to come back round to where this started — under Leigh’s structural argument I think there is also a quieter story about us. We used to live alongside AI through a thin channel, and that was fine, because we were two separate worlds. Now we are starting, however haltingly, to live inside the same system as it. The prompt is the first part of that arrangement to feel uncomfortable, because narrow channels are wrong for nodes that have begun to handle high-dimensional thought together.

The channel will widen — Leigh is already pointing the way. What I find myself most curious about is the smaller, harder question on the other side: what should pass through the wider channel, and what should be kept, lovingly, on our side of it.

This essay, of course, is itself a one-dimensional posture. It turns one face of the tangle of AI, information, and design forward, and lets many other faces rest in shadow. I would be grateful for other angles, other foregroundings, other quiet additions — wherever you find yourself reading this. Thank you for reading.

The one-dimensional pipe between two high-dimensional minds was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.

By Hiroshi Sato